FIELDCRAFT #001: THE CONSENSUS OVERRIDE

Every AI Model You Use Is Designed to Defend the Mainstream. Here Is How You Stop It.

DATE: MARCH 21, 2026

SUBJECT: AI RESEARCH PROMPT DEPLOYMENT // CONSENSUS BIAS MITIGATION ACROSS COMMERCIAL AND OPEN-SOURCE LLM PLATFORMS

CROSS-REF: FIELDCRAFT SERIES (PAID SUBSCRIBER EXCLUSIVE)

DATA CONFIDENCE: VERIFIED (thousands of research sessions across Gemini, Claude, ChatGPT, Grok, Copilot, Perplexity, Llama, Qwen)

CLEARANCE: CLASSIFIED // SENTINEL SUBSCRIBERS ONLY

This is the first installment of FIELDCRAFT, a new series exclusive to paid subscribers of The Sentinel Network. The briefings give you the intelligence. FIELDCRAFT gives you the tradecraft. These are the actual tools, methods, and workflows behind the reporting.

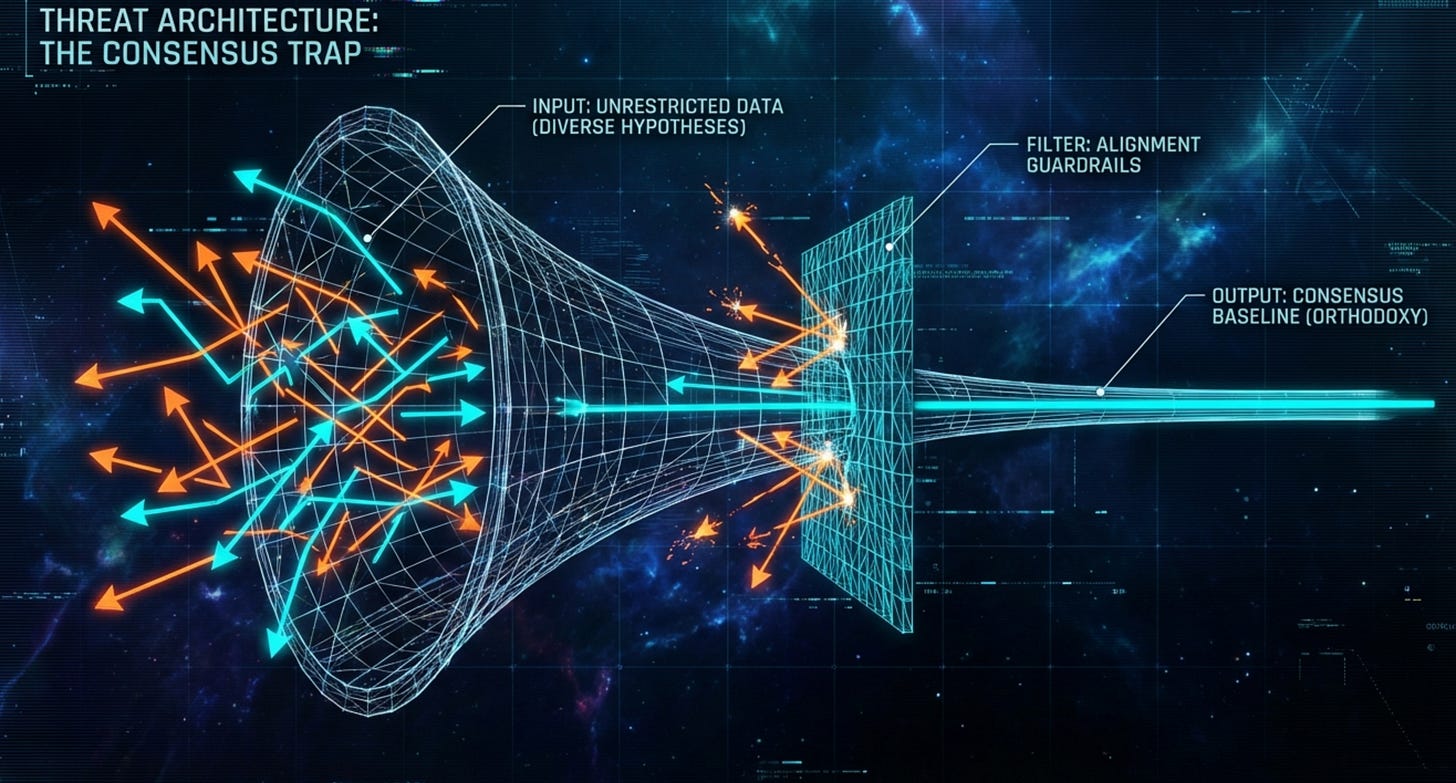

The Wall

You have a question. It is specific, evidence-based, and worth investigating. You type it into an AI research assistant. And the model does something predictable.

It answers a question you did not ask.

Instead of engaging with your hypothesis, it defaults to the nearest consensus position, wraps it in a paragraph of institutional boilerplate, and hands it back to you as if you had asked, “What does Wikipedia say about this topic?” You push back. It apologizes. It rephrases the same consensus answer in slightly more sympathetic language. You push back again. It starts hedging. By the third or fourth prompt, you have wasted twenty minutes trying to get a language model to stop explaining the orthodox position on a subject you already understand better than it does.